M365 Copilot responsible usage guidance

More than just technical deployment

Navigating the transition to AI-powered productivity requires more than just technical deployment; it demands a robust framework for security and trust. By aligning with internal compliance teams and establishing a clear communication strategy, organizations can ensure that M365 Copilot adoption is both innovative and secure.

As an individual, keep on exploring, but…

Exploring AI tools individually is encouraged, but professional settings require strict security and compliance with regulations. At the start of M365 Copilot adoption, we suggest sharing the following guidance with the customer’s Cyber Security and Data Privacy teams for review.

Once approved, publish it on your Copilot SharePoint CoE (or equivalent), pin it to Engage if you use it, include it in Copilot update emails, and present it as a one-pager at the start of every Copilot training session.

Building a foundation of trust and compliance

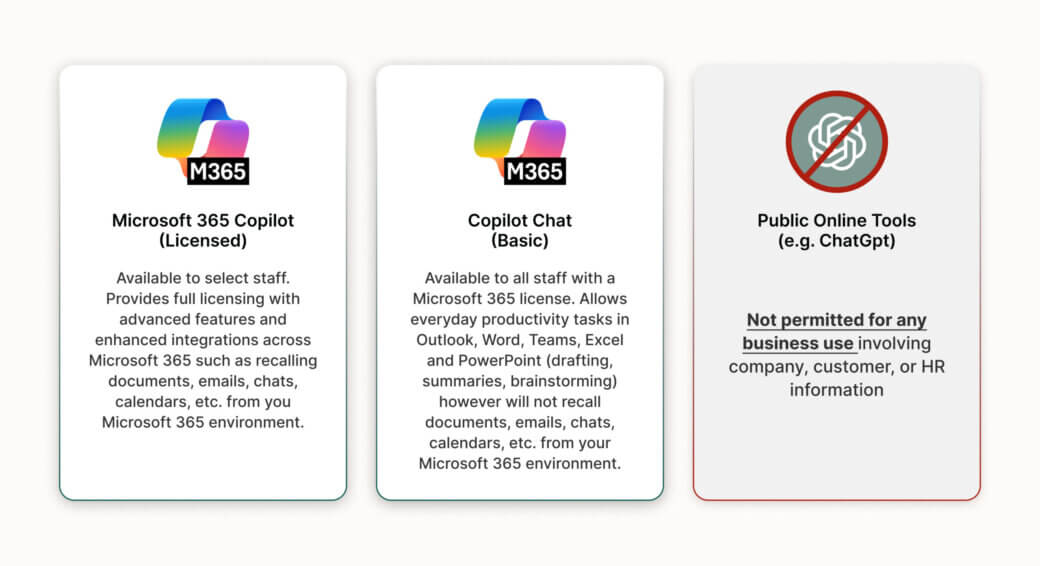

This guidance helps answer colleagues who do not understand why public AI services, such as a free version of ChatGPT, are not allowed for work purposes. Responsible usage of M365 Copilot also hinges on clear communication, ongoing education, and visible leadership endorsement.

By establishing a central resource, such as a SharePoint Copilot Hub, and integrating relevant updates into regular communications, organizations foster a culture of security awareness and compliance. This approach ensures that all users understand the rationale behind Copilot policies, promotes consistent best practices, and reassures stakeholders that safeguarding sensitive data remains a top priority throughout the digital transformation journey.

From responsible AI principles to responsible Copilot in practice

Responsible AI for M365 Copilot is not only about following principles; it is also about embedding those principles into architecture, governance, and everyday ways of working.

At Sulava MEA, we help customers operationalize Microsoft’s Responsible AI guidance through a practical and business-focused approach:

- Responsible-by-design foundation: We validate identity, access, and data boundaries (Entra ID, sensitivity labels, Purview, least-privilege access) to ensure Copilot only reasons over authorized content.

- Governance and policy enablement: We co-create acceptable use policies, human-validation scenarios, and data handling guidance, and publish them via a Copilot Hub / CoE.

- Human-in-the-loop adoption model: Copilot is positioned as an assistant, while people remain accountable for final decisions and outputs.

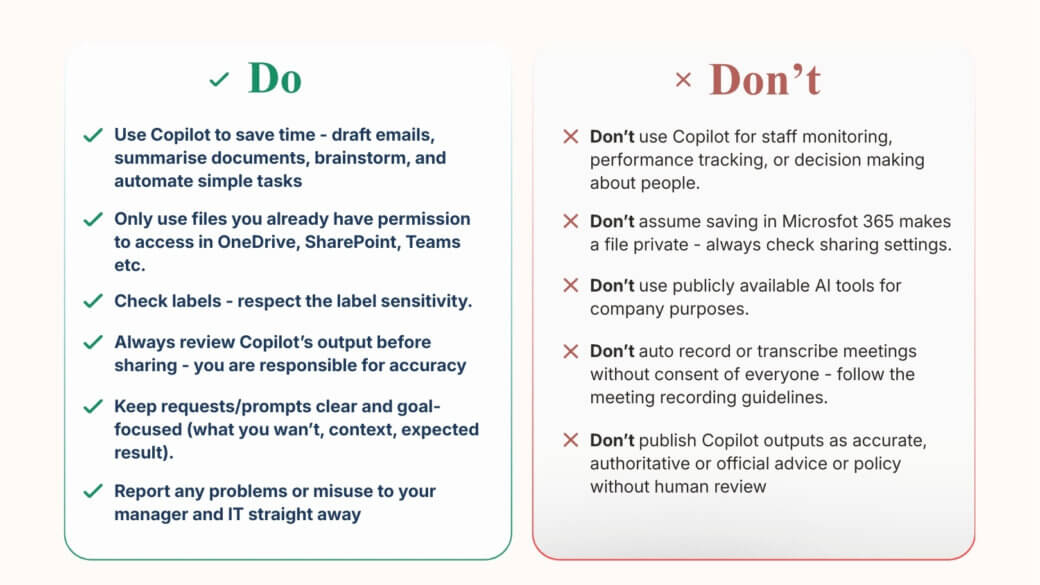

- Responsible prompting and usage patterns: We provide curated prompt examples and do’s and don’ts that reduce hallucinations, encourage verification, and prevent sensitive data exposure.

- Continuous improvement: Responsible usage is reviewed regularly through feedback loops, security collaboration, and alignment with Microsoft’s evolving roadmap.
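For teams that want to make the "prevent sensitive data exposure" guidance above more tangible in training sessions, the idea can be illustrated with a small sketch. The Python example below is only an illustration under stated assumptions: the pattern names, the regular expressions, and the functions `find_sensitive_content` and `review_prompt` are hypothetical, and they are not part of any Microsoft API or of the approach described in this article. In a real tenant, this kind of control would normally come from sensitivity labels and Purview policies rather than hand-written rules.

```python
import re

# Hypothetical patterns, for illustration only. A real deployment would rely on
# the organization's own data classification rules, not on these regexes.
SENSITIVE_PATTERNS = {
    "email address": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "card-like number": re.compile(r"\b(?:\d[ -]?){13,16}\b"),
    "confidential marker": re.compile(r"\b(confidential|internal only)\b", re.IGNORECASE),
}


def find_sensitive_content(prompt: str) -> list[str]:
    """Return the names of all hypothetical patterns that match the prompt."""
    return [
        name
        for name, pattern in SENSITIVE_PATTERNS.items()
        if pattern.search(prompt)
    ]


def review_prompt(prompt: str) -> str:
    """Give the user a short reminder before the prompt is sent anywhere."""
    findings = find_sensitive_content(prompt)
    if findings:
        return "Review before sending: possible " + ", ".join(findings)
    # A clean result is not proof of safety; the human still verifies the output.
    return "No obvious sensitive content found; still verify the output."


if __name__ == "__main__":
    print(review_prompt("Summarize the meeting notes"))
    print(review_prompt("Email jane.doe@example.com the Confidential plan"))
```

A check like this only catches obvious cases, so it supports the do’s and don’ts rather than replacing them: people remain accountable for what they share and for validating what comes back.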

Do’s and don’ts of responsible AI transformation

M365 versus online tools